Removed by mod

This account is being kept for posterity, but it won’t see further activity past February.

If you want to contact me, I’m at /u/[email protected]

Sorry for the double reply. Here’s a practical idea: what if the mods of this comm contacted lemmy.ml’s admins? Ideally doing two things:

Among the admins I think that Nutomic would be the best to contact, given the github thread.

You’re talking about your thread about Mahou Shoujo ni Akogarete, right? It’s still in the modlog for me, even in private mode. I don’t think that they removed the entry.

Lunix sucks so much that it got stuck on version 2 for years.

In my opinion, the migration is sensible because:

For context, I encourage people to check this discussion in the “join Lemmy” site GitHub. Keep in mind that both of the Lemmy developers in that discussion are also admins of the lemmy.ml instance, and they clearly disagree on whether the instance in question should be considered as “hosting CSAM” or not.

I think that this community should migrate, but for a different reason: topic.

The topic of lemmy.ml is privacy and free-as-freedom software. Most other content here is off-topic, including anime. It was fine when it was just lemmy.ml and lemmygrad.ml, as you’d have no other place to discuss anime in Lemmy, but now the situation is different.

And ideally, communities should be managed/moderated/administered by people who know the topic of the community well.

Aaaaah. I really, really wanted to complain about the excessive amount of keys.

(My comment above is partially a joke - don’t take it too seriously. Even if a new key was added it would be a bit more clutter, but not that big of a deal.)

The source that I’ve linked mentions semantic embedding; so does further literature on the internet. However, the operations are still being performed with the vectors resulting from the tokens themselves, with said embedding playing a secondary role.

This is evident for example through excerpts like

The token embeddings map a token ID to a fixed-size vector with some semantic meaning of the tokens. These brings some interesting properties: similar tokens will have a similar embedding (in other words, calculating the cosine similarity between two embeddings will give us a good idea of how similar the tokens are).

Emphasis mine. A similar conclusion (that the LLM is still handling the tokens, not their meaning) can be reached by analysing the hallucinations that your typical LLM bot outputs, and asking why that hallu is there.
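To make the cosine similarity bit from that excerpt concrete, here’s a minimal sketch. The three-dimensional vectors are made up for illustration; real token embeddings are learned and have hundreds or thousands of dimensions.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hypothetical toy embeddings (assumption: not taken from any real model).
embeddings = {
    "cat": [0.9, 0.8, 0.1],
    "dog": [0.8, 0.9, 0.2],
    "car": [0.1, 0.2, 0.9],
}

cat_dog = cosine_similarity(embeddings["cat"], embeddings["dog"])
cat_car = cosine_similarity(embeddings["cat"], embeddings["car"])
print(f"cat/dog: {cat_dog:.2f}, cat/car: {cat_car:.2f}")
```

With these toy numbers "cat" ends up much closer to "dog" than to "car". Note that the similarity is still a property of the vectors assigned to the tokens, which is the point I’m making above.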

What I’m proposing is deeper than that. It’s to use the input tokens (i.e. morphemes) only to retrieve the sememes (units of meaning; further info here) that they’re conveying, then discard the tokens themselves, and perform the operations solely on the sememes. Then for the output you translate the sememes obtained by the transformer into morphemes=tokens again.
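A toy sketch of that pipeline, just to show the shape of the idea. Everything here is hypothetical: the lexicon, the sememe labels, the relatedness table and the function names are invented for illustration, and the step where a transformer would operate on the sememes is left out entirely.

```python
# Hypothetical lexicon: each token (morpheme) maps to its candidate sememes.
LEXICON = {
    "bank": ["FINANCIAL_INSTITUTION", "RIVER_EDGE"],
    "river": ["RIVER"],
    "money": ["MONEY"],
    "near": ["PROXIMITY"],
}

# Hypothetical pairs of related sememes, used only to disambiguate.
RELATED = {
    ("RIVER_EDGE", "RIVER"),
    ("FINANCIAL_INSTITUTION", "MONEY"),
}

# Hypothetical output mapping: sememe back to a morpheme/token.
REALIZE = {
    "FINANCIAL_INSTITUTION": "bank",
    "RIVER_EDGE": "riverbank",
    "RIVER": "river",
    "MONEY": "money",
    "PROXIMITY": "near",
}

def to_sememes(tokens):
    """Use the tokens only to retrieve sememes; the tokens are then dropped."""
    candidates = [LEXICON.get(t, ["UNKNOWN"]) for t in tokens]
    # Unambiguous tokens provide the context for the ambiguous ones.
    context = {c[0] for c in candidates if len(c) == 1}
    chosen = []
    for options in candidates:
        # Pick the candidate related to the most context sememes
        # (ties fall back to the first candidate listed).
        best = max(options, key=lambda s: sum((s, c) in RELATED for c in context))
        chosen.append(best)
    return chosen

def to_tokens(sememes):
    """Translate sememes back into morphemes for the output."""
    return [REALIZE.get(s, "?") for s in sememes]

sememes = to_sememes(["bank", "near", "river"])
print(sememes)
print(to_tokens(sememes))
```

Here "bank" next to "river" resolves to the river-edge sememe, while "bank" next to "money" resolves to the financial one, and everything after `to_sememes` never sees the original tokens.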

I believe that this would have two big benefits:

And it might be an additional layer, but the whole approach is considerably simpler than what’s being done currently - pretending that the tokens themselves have some intrinsic value, then playing whack-a-mole with situations where the token and the contextually assigned value (by the human using the LLM) differ.

[This could even go deeper, handling a pragmatic layer beyond the tokens/morphemes and the units of meaning/sememes. It would be closer to what @[email protected] understood from my other comment, as it would then deal with the intent of the utterance.]

Not quite. I’m focusing on chatbots like Bard, ChatGPT and the like, and their technology (LLM, or large language model).

At the core those LLMs work like this: they pick words, split them into “tokens”, and then perform a few operations on those tokens, across multiple layers. But at the end of the day they still work with the words themselves, not with the meaning being encoded by those words.

What I want is an LLM that assigns multiple meanings for those words, and performs the operations above on the meaning itself. In other words the LLM would actually understand you, not just chain words.

Complexity does not mean sophistication when it comes to AI and never has and to treat it as such is just a forceful way to make your ideas come true without putting in the real effort.

It’s a bit off-topic, but what I really want is a language model that assigns semantic values to the tokens, and handles those values instead of directly working with the tokens themselves. That would probably be far less complex than current state-of-the-art LLMs, but way more sophisticated, and require far less data for “training”.

creating a label and checking the skip invoice box

That works great too, especially if you want to use less foolproof filters. Or even a mix of both strategies.

Oh “great”, more crap between Ctrl and Alt.

[Grumpy grandpa] In my times, the space row only had five keys! And we did more than those youngsters do with eight, now nine keys!

Thank you! It’s working now.

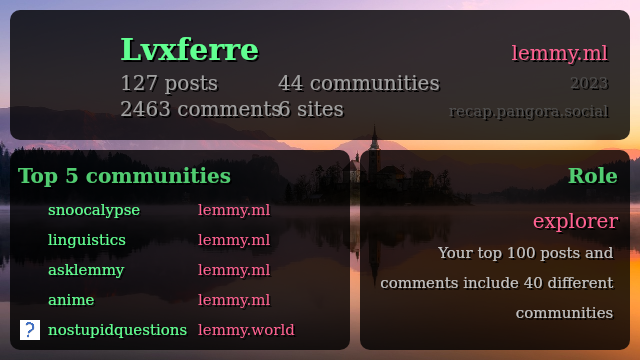

It’s giving me an error, “Error Finding Entity // Make sure you spelled the entity correctly and that it exists!”, when I use my username for lemmy.ml; curiously it works well when I do it for my beehaw.org account.

If you want, you could use Gmail filters to delete those emails automatically. Here’s how:

Important: never use as a filter anything that legitimate users might reasonably say. Only use things that you’re fairly certain come from a spammer.

EDIT: I repeated two steps without noticing it. My bad.

Got someone in my family with type 1 diabetes, and we’ve been hearing about the “magical” solution coming “soon” since she was diagnosed with it, in her childhood, around 30 years ago.

As such I’ll keep what I see as a healthy amount of scepticism towards this piece of news.

Ah, got it. My bad. Yeah, not providing anything is even lazier, and unlike “lazy” bash scripts it leaves the user clueless.

The settlement is right at the border of what would be controlled by the Inca government, two millennia later. It shows that there’s better access to the region from the west than you’d be led to believe, with the Andes in the way.

As such, if they find other cities further east, I’m predicting that, culturally speaking, they’ll look nothing like this one, even if they happen to be roughly the same size.

“If you don’t have chicha, any small thing will do.” (reference to a certain song)

Seriously now. Potentially yucca too - it grows right next door, and if they got maize from North America then they likely traded for crops.