Listen, there’s definitely enough carbon in the body to boost that into a steel sword.

If we can make diamonds out of corpses, we can make steel.

Can’t wait for FOSS brain implants, it would still be hellish but a fun kind of hellish.

I want someone like Linus Torvalds to verbally abuse someone for not understanding basic computational neuroscience.

You would like Global Workspace Theory, which basically says your consciousness is the result of components of the brain broadcasting their information to the whole.

I also like Integrated Information Theory, which measures the conscious experience of a system by how integrated it is, meaning that you can’t reduce the system to the sum of its parts without losing the emergent properties.

Yeah, I love Foundry, but I’m convinced the DM needs technical knowledge to use it. I ran a server for a non-tech-savvy DM and it was like working customer service.

With plenty of investment you can get the tabletop to be almost exactly what you want it to be, and for a popular system like 5e you can make it as automated as a Baldur’s Gate game. You just need to download a lot of modules and customize a lot of settings to get there. Without that it just becomes a less intuitive Roll20.

And I must stress from experience, never offer to host/troubleshoot a server for someone else, especially if the DM likes to complain or can’t handle minor technical setbacks.

I’m curious, are there actually that many 42s in the system? (More than 69 sounds unlikely.)

What if the LLM is getting tripped up because 42 is always referred to as the answer to “the Ultimate Question of Life, the Universe, and Everything”?

So you ask it a question like “give a number between 1 and 100”, and it answers 42 because that’s the answer to “Everything”, according to its training data.

Something similar happened to Gemini. Google discouraged Gemini from giving unsafe advice because it’s unethical. Then Gemini refused to answer questions about C++ because the language is considered “unsafe” (in the memory management sense). Gemini read that as “unsafe” in the normal sense and concluded that it was unethical. It’s like those jailbreak tricks but from its own training set.

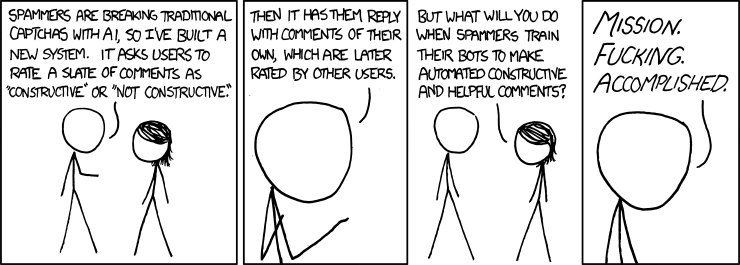

Alt Text: And what about all the people who won’t be able to join the community because they’re terrible at making helpful and constructive co-- … oh.

If you want barebones Windows I’d suggest you cough cough obtain Windows 10 LTSC.

It’s got most of the bloatware cut out, you just have to re-enable the old-style picture viewer.

Though when I eventually make a new PC, I’m probably just gonna use Linux Mint because I hear running Windows games/software isn’t nearly as bad nowadays, thanks Steam.

Japan has a similar worldview to Americans because there have been multiple points in history where we brute-forced our ways on them, conveniently at times when faith in their old ways was fading.

Two examples: forcing Japan’s borders open while it remained isolated with outdated weaponry, and the end of WW2.

Capitalism was drilled into their culture until its teeth sunk in and they had their economic boom.

From what I know, most raptors had feathers and that’s where birds came from.

The broader group of theropods, including the T-Rex, had a precursor to feathers literally called “Dinofuzz”.

All other kinds of dinosaurs I believe are actually scaly like we thought.

Just to clarify I am implying the medical provider would be the one sued. I didn’t think ChatGPT would be in the wrong.

ChatGPT has just done a great job revealing how lazy and poorly thought out people are all over.

Typically for the AI to do anything useful you’d copy and paste the medical records into it, which would be patient data.

Technically you could expunge enough data to keep it in line with HIPAA, but if there are people careless enough not to proofread their paper, then I doubt those people would prep the data correctly.

Come to think of it, I wonder if using ChatGPT violates HIPAA because it sends the patient data to OpenAI?

I smell a lawsuit.

May I introduce you to our lord and savior JavaScript?

I thought you were being too cynical, because plenty of plants evolved this technique, but then I realized that because of AI I have absolutely no idea if they’re real or not unless I spend time that I don’t have on researching it.

This specific instance probably.

But the point is so much of history ignores the female perspective (or the non-European perspective). Sometimes intentionally, like all the female scientists who contributed to foundational studies and didn’t get their name on the published paper.

And this is really damaging; I have a family member who legitimately believes that men of European descent are the smartest throughout history (when I brought up the Islamic Golden Age as a counterexample he accused it of being propaganda).

American schools are so bad at teaching diverse history. So many still struggle with the basic truths about Columbus and the Natives.

That’s simultaneously funny and depressing.

The way I’ve come to understand it is that LLMs are intelligent in the same way your subconscious is intelligent.

It works off of kneejerk “this feels right” logic. That’s why the images look like dreams: realistic until you examine further.

We all have kneejerk responses to situations and questions, but the difference is we filter them through our conscious mind, to bring long-term thinking and our own choices into the mix.

LLMs just keep getting better at the “this feels right” stage, which is why completely novel or niche situations can still trip them up: they haven’t developed enough “reflexes” for that problem yet.

Not enough negative mass

Don’t know if this has been fixed, but Gemini was telling people it’s unethical to teach people C++ or memory management.

That’s because C++ is considered “memory unsafe”, but Gemini took it literally and considered it too unsafe to teach.